I've set LC_ALL and LANG explicitly to be sure that this is not locale

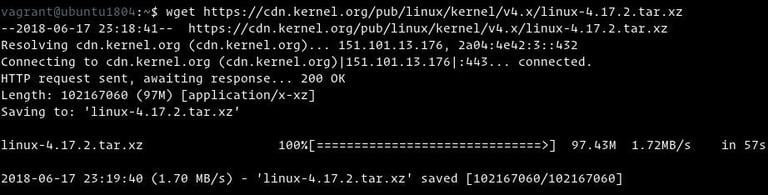

# Wget zero-byte file download

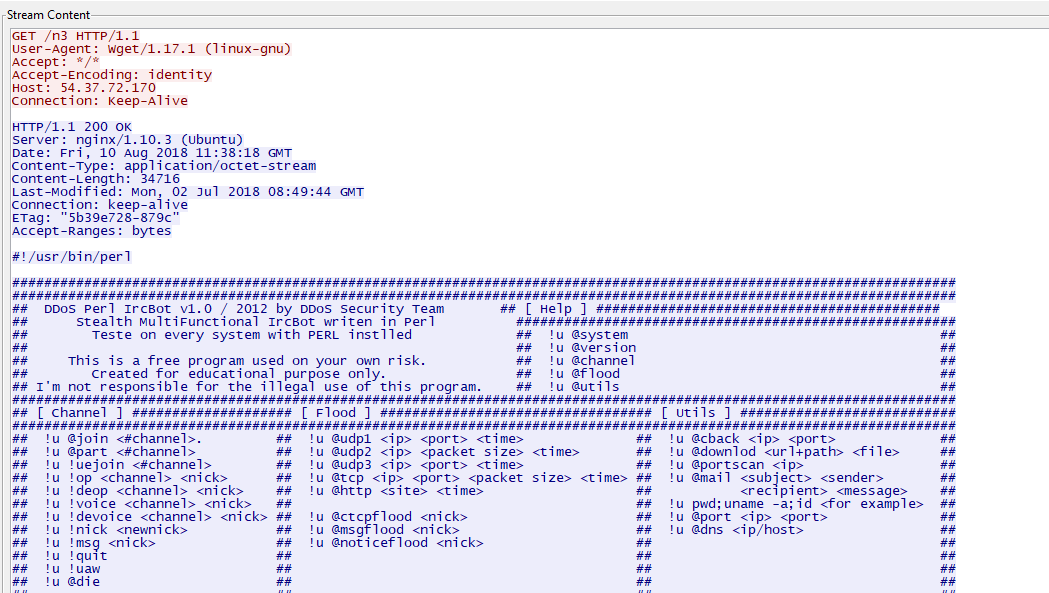

Mon 06:52:46 PM UTC, original submission:

Quote: During download with wget I've redirected output into file with the [...]

So, this appears to be a misunderstanding of what that value is intended to represent, combined with unavoidable shortcomings in how it is calculated. That value does not represent how much data was downloaded (which is represented in the left-hand column), but rather the rate (per second) of download corresponding to just that single row. This is calculated by taking how many bytes are represented by this row and dividing it by the actual time it took to fetch those bytes.

Consider the case where 20k is downloaded, and then it takes a second or two, but then another 80k is downloaded (each row consisting of 50k). The first row will show a download rate of maybe 25k a second, but the next row's data will then already be available in the system buffers, so when Wget tries to fetch it, it will obtain it virtually instantaneously. So, the bytes downloaded for row two will be 50k, but the amount of time taken will be fairly close to zero, resulting in an abnormally high number. This will be especially true on the very last row, which is likely to have less than 50k, so if ~12k is fetched instantaneously because it was already available, the download rate leaps to something huge.

A discussion should take place on what more appropriate download-rate calculation methods might exist.

Your app receives (0x8000 bytes of data, False). It looks like we could track the size of the chunk and not trigger your app if it is the last subchunk of an HTTP chunk. That does not work for a compressed payload, because there we cannot track the size of a chunk: it is the size of the compressed data, but we receive uncompressed data from the nginx parser.

Possible solution: make a hack that tracks the size of a chunk when no compression is involved. For a compressed payload we can do nothing, so the behavior will stay the same.

You may ask, if so, why did the previous implementation not trigger all that? I will answer: it had bugs, proven with test cases. For example, in your case it did not report the "end of chunk" event, so applications that use etcd watchers could not distinguish the borders of the messages.

Is there anything else I can contribute to find the error?
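The per-row rate calculation described for Wget above can be illustrated with a small sketch. This is not Wget's actual code: the function names and the timings are hypothetical, chosen only to mirror the 50k-per-row example, and the cumulative average is shown purely for comparison, not as the method the discussion settled on.

```python
def row_rates(rows):
    """Per-row rate: bytes represented by the row / time spent fetching them.

    rows is a list of (bytes_in_row, seconds_taken) tuples (hypothetical data).
    """
    return [
        nbytes / seconds if seconds > 0 else float("inf")
        for nbytes, seconds in rows
    ]


def cumulative_rate(rows):
    """One possible alternative: total bytes divided by total elapsed time."""
    total_bytes = sum(nbytes for nbytes, _ in rows)
    total_seconds = sum(seconds for _, seconds in rows)
    return total_bytes / total_seconds if total_seconds > 0 else float("inf")


# Assumed timings: row one waits on the network for about two seconds,
# rows two and three are served almost instantly from system buffers.
rows = [(50_000, 2.0), (50_000, 0.001), (12_000, 0.0005)]

rates = row_rates(rows)
overall = cumulative_rate(rows)
print(rates)    # first row is modest, later rows are abnormally high
print(overall)  # stays in the same range as the real transfer speed
```

With these made-up numbers the first row reports 25k a second, while the buffered rows report rates in the tens of megabytes per second, which is the effect the explanation attributes to near-zero fetch times.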

The only obvious difference between the working and non-working environments is the SSL library. It's a web service with many clients on different platforms (curl, wget, Java, Firefox), and no one else has the problem.

Unfortunately the URL is not publicly reachable. Using the Requests library in the same environment using the same https URL I also get the correct result.

The number and position of the zero-length chunks sometimes varies from call to call. Calling the same URL over http I get varying chunk sizes, but never a zero-length one before the end, so everything is good. Of course, awaiting response.text() over https I get only 8184 (or a multiple) bytes of content instead of the expected 111883.
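To make the zero-length chunk problem concrete, here is a minimal sketch of a reader that does not treat an empty piece as the end of the body. It assumes a stream of `(data, end_of_http_chunk)` pairs, the shape suggested by the `(0x8000 bytes of data, False)` tuple quoted in this thread and, to my knowledge, what aiohttp's `response.content.iter_chunks()` yields. The `collect_body` helper and the `fake_stream` generator are hypothetical stand-ins so the example runs without a network connection.

```python
import asyncio


async def collect_body(chunks):
    """Join (data, end_of_http_chunk) pairs into one body.

    Empty pieces are skipped instead of being read as end of stream.
    Returns the body and the number of completed HTTP chunks.
    """
    parts = []
    http_chunks = 0
    async for data, end_of_http_chunk in chunks:
        if data:
            parts.append(data)
        if end_of_http_chunk:
            http_chunks += 1
    return b"".join(parts), http_chunks


async def fake_stream():
    # Hypothetical stand-in for a response stream: a zero-length piece
    # appears in the middle, followed by more real data.
    yield b"a" * 8184, False
    yield b"", True
    yield b"b" * 100, True


body, http_chunks = asyncio.run(collect_body(fake_stream()))
print(len(body), http_chunks)
```

A loop that stopped at the first empty piece would return only the first 8184 bytes of this simulated stream, which resembles the truncated result reported above.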